Staffing Model Redesign

Customs and Border Protection maintains a staff of tens of thousands of officers at facilities all over the country and in a few places overseas. Our company was contracted to reverse engineer and redesign the spreadsheet tool that was being used to justify the roughly 27,000 officers used to staff the nation's land, sea, and air Port of Entry facilities. I was assigned to do the reverse-engineering and specify the features the new system would need, subject to the customer's request that it "not look like a spreadsheet." I spent quite a bit of time in the CBP office in the Reagan Building working with the individual who had developed and maintained the spreadsheet over several years. He was a diligent and thoughtful guy but didn't have a background in handling data, building in consistent self-checks, and maintaining a clear picture of what updates were getting done every year.

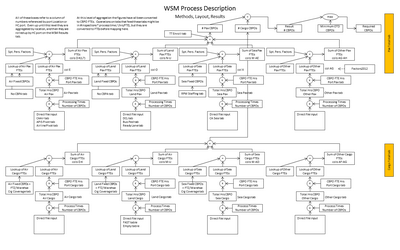

The idea behind the spreadsheet was that if you knew the total number of activities of each kind performed at each port, how long each activity took, on average, the number of officers it took to do it, and the claims on each officer's time for overhead activities like vacation, sick leave, training, and personal logistics then it should be possible to calculate how many officers would be needed to carry out all of those operations. There were also considerations for management and support personnel, provisions for yearly traffic volumes based on assumptions about overall trends and specific knowledge of new and modified facilities, and a few other kickers.

I documented the workings of the existing spreadsheet in excruciating detail, during which time I found some broken references, errors, and omissions that I helped the staffer correct. I then specified a set of requirements for what a replacement system would have to do, incorporating many of the customer's requests for things like online dashboards, user and editing control, and graphical output.

The software architect developed a system that allowed a user to specify how input data was to be read from input files (e.g., what internal variable a data item gets assigned to based on the header value for that column of data in an input file) and also a set of functions and mathematical operations that replicated the calculations performed in the spreadsheet. This was all done in an interactive, modular way that replicate the ease of use of a spreadsheet while automating the handwork and adding tracking and status information. It was this project I had in mind, along with an older one I wrote, when I described how systems should incorporate sufficient meta-data where appropriate.

I also included specifications for user interface features that would greatly enhance the ease of editing and verifying the large data set, automatically connect to agency databases in order to pull yearly data and continually verify that the data definitions had not changed , maintain contact information to the people that provided all of the inputs, keep track of which inputs had been updated each year and to automatically send out update requests, look for anomalies in the data, specify special calculations based on location-specific circumstances, and allow managers to sign off on various operations.

I served as the go-between, in Scrum terms as the Product Owner, for various representatives of the customer and our in-house technical team. I presented some of the software updates and collected feedback from the users and managers of the system. I worked with agency staff to resolve inconsistencies in each year's source data since different reports of the same activity did not yield the same results. I found this out because the base system was meant to calculate static requirements for port personnel. Not only did this not take seasonality into account, it also didn't consider the issues associated with actually staffing ports on an hour-to-hours basis as daily traffic ebbs and flow, we were also charged with incorporating a basic discrete-event simulation capability that would simulate operations at a port during the day. When we compared the hourly data with the yearly data we found that the results did not agree all that well, so we tried to trace all of the inputs back to their source so we would understand where the difference would arise.

I specified a bunch of internal data mechanisms and ways to efficiently fire off the daily (and weekly) simulations on an automated basis to that columns of output data could be populated at the touch of a button. The company hired a consultant to begin introducing us to the Scrum process, a formalization of the iterative, Agile processes we always used anyway, but it wasn't taken much beyond a few stand-up meetings and sticky notes on a wall. Once the system was developed I found ways to populate a test spreadsheet with the output from the original spreadsheet and the new staffing model's static outputs to see how well they matched. I had to create a few extra columns and some custom calculations in the new tool but eventually I got the 200-ish values from each of the 500+ locations to match up to several decimal places, thus proving that the system met the original requirements.