A Simulationist's Framework

for Business Analysis

Part 08:

Data

How To Get It and How To Use It

R.P. Churchill

CBAP, IIBA-CBDA, PMP, CSPO, CSM, Lean Six Sigma Black Belt

www.rpchurchill.com/presentations/BAseries/08_Data

30 Years of Simulation

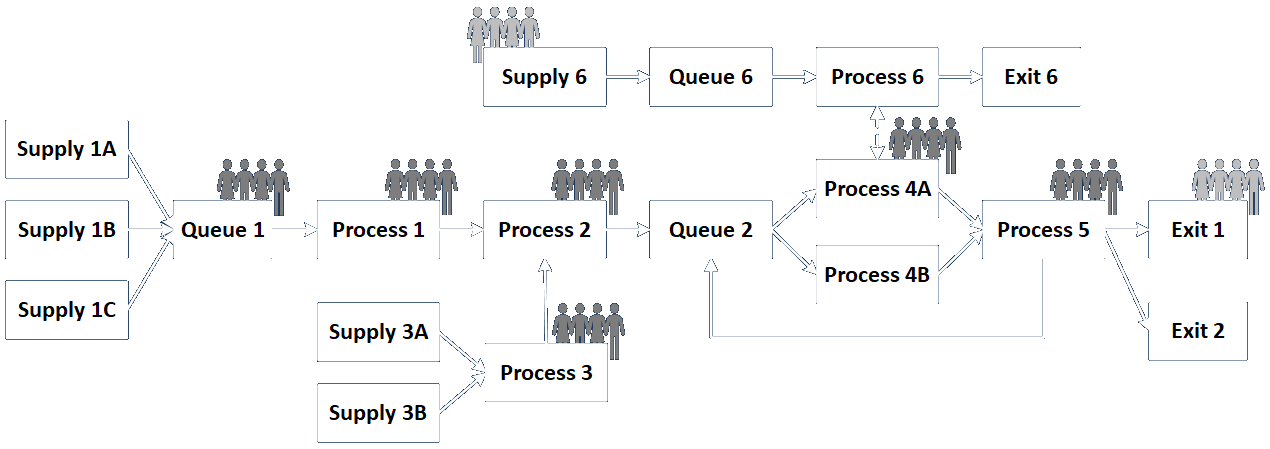

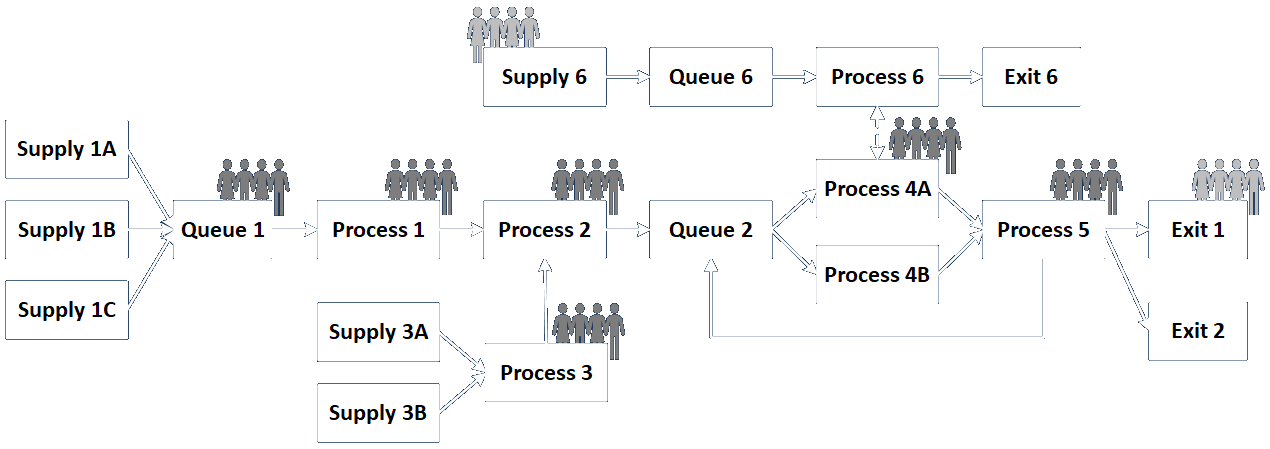

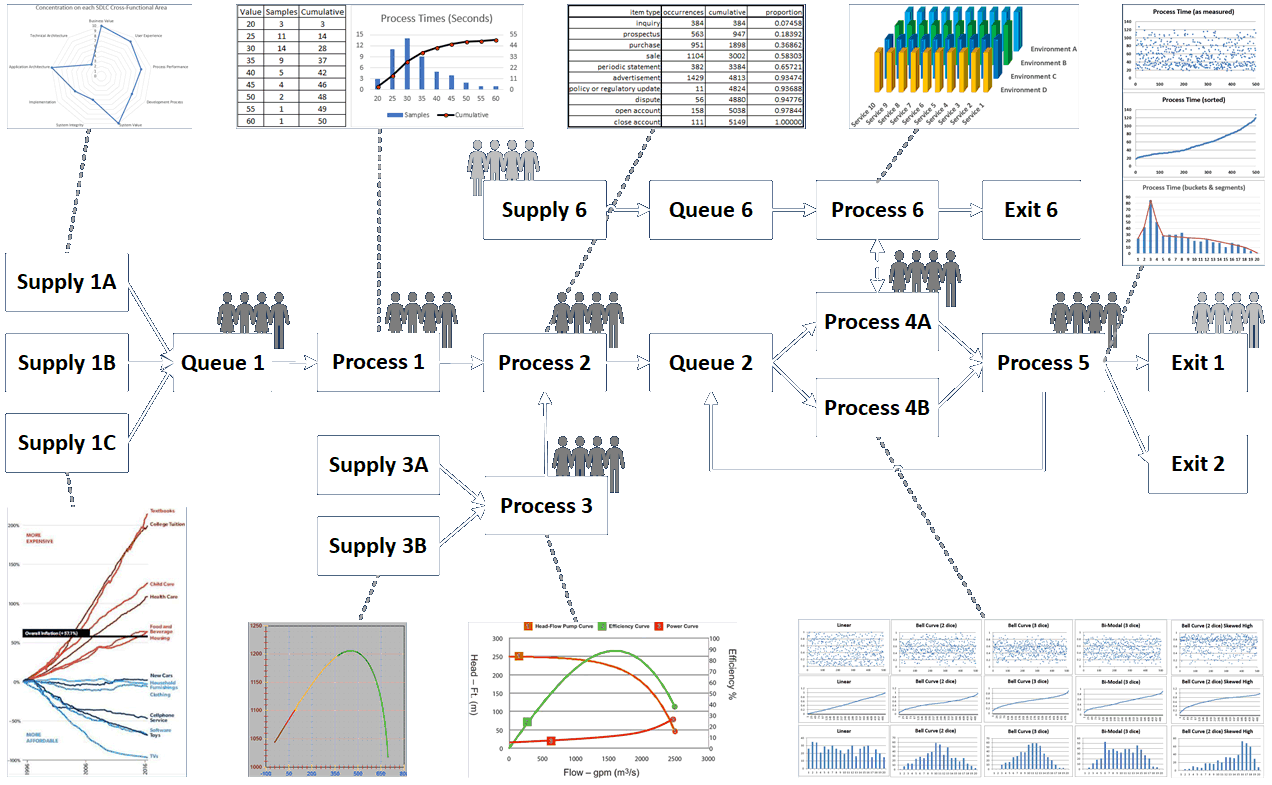

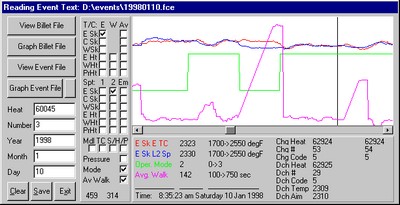

Continuous simulation of the heating of a square billet and Discrete-Event simulation of a multi-phase process.

30 Years of Simulation

Industries

Previous Talks About Data

Our friend Richard Frederick gave an excellent series of twenty talks on data from last August through this January, and I highly encourage you to review them at your convenience.

I've worked with data for a long time, and I still learned a lot from him.

The videos and PDFs from his talks may be found here.

The Framework:

- Project Planning

- Intended Use

- Assumptions, Capabilities, Limitations, and Risks and Impacts

- Conceptual Model (As-Is State)

- Data Sources, Collection, and Conditioning

- Requirements (To-Be State: Abstract)

- Functional (What it Does)

- Non-Functional (What it Is, plus Maintenance and Governance)

- Design (To-Be State: Detailed)

- Implementation

- Test

- Operation, Usability, and Outputs (Verification)

- Outputs and Fitness for Purpose (Validation)

- Acceptance (Accreditation)

- Project Close

The Framework: Simplified

- Intended Use

- Conceptual Model (As-Is State)

- Data Sources, Collection, and Conditioning

- Requirements (To-Be State: Abstract)

- Functional (What it Does)

- Non-Functional (What it Is, plus Maintenance and Governance)

- Design (To-Be State: Detailed)

- Implementation

- Test

- Operation, Usability, and Outputs (Verification)

- Outputs and Fitness for Purpose (Validation)

Customer Feedback Cycle

Agile is Dead (in a rigorously formal sense)

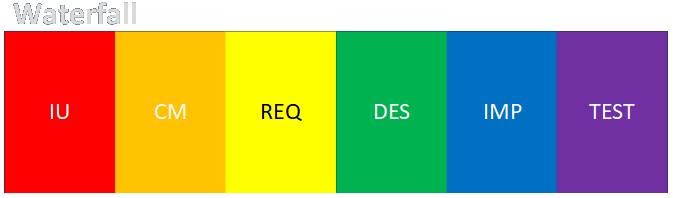

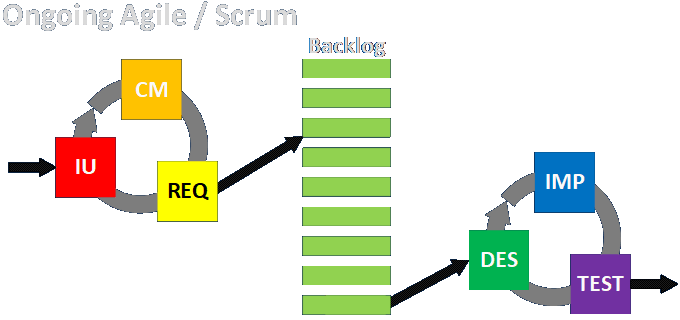

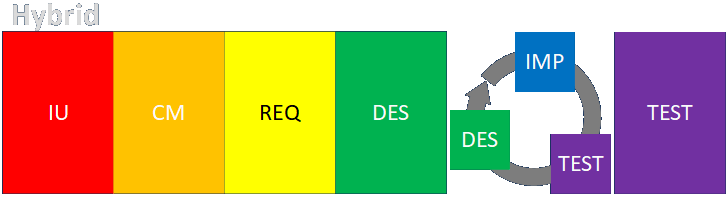

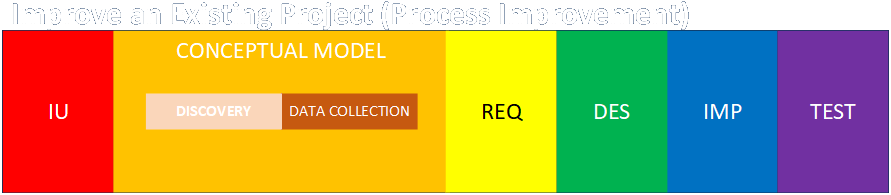

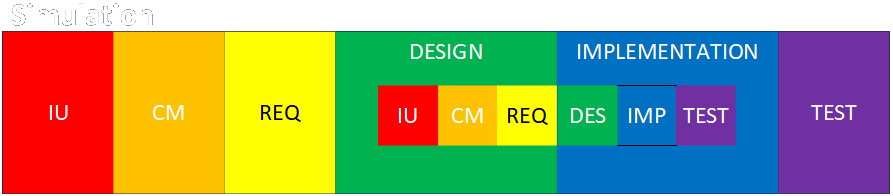

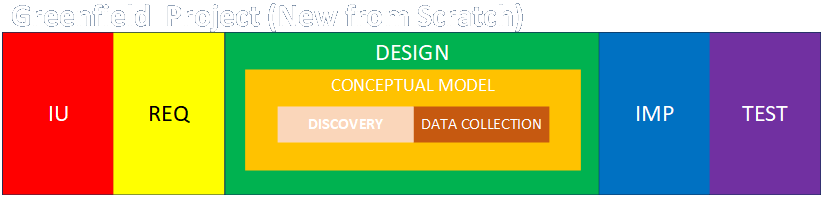

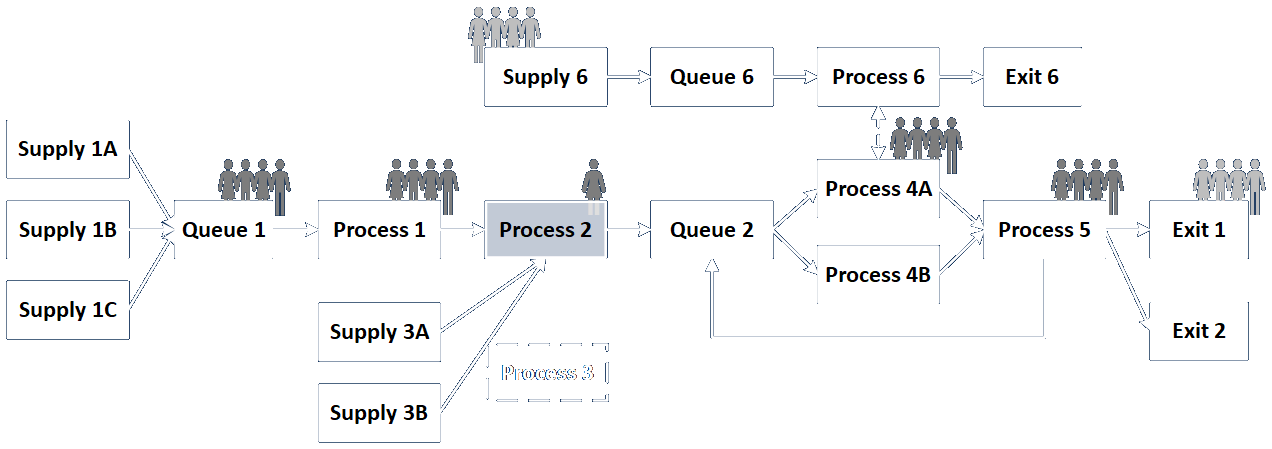

Basic Engagement Structures

Link to detailed discussion.

Engagement Structure Variations

Link to detailed discussion.

Engagement vs. System vs. Solution

The engagement is what we do to effect a change that serves customers.

The system is what we analyze and either build or change to serve customers.

The solution is the change we make to serve customers.

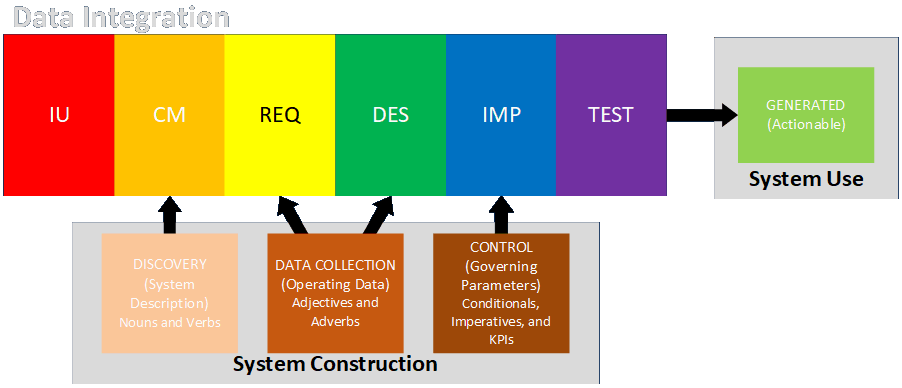

Data Is Always Part of the Engagement

- System Description: data that describes the physical or conceptual components of a process (tends to be low volume and describes mostly fixed characteristics)

- Operating Data: data that describe the detailed behavior of the components of the system over time (tends to be high volume and analyzed statistically)

- Governing Parameters: thresholds for taking action (control setpoints, business rules, largely automated or automatable)

- Generated Output: data produced by the system that guides business actions (KPIs, management dashboards, drives human-in-the-loop actions, not automatable)

Links to detailed discussions.

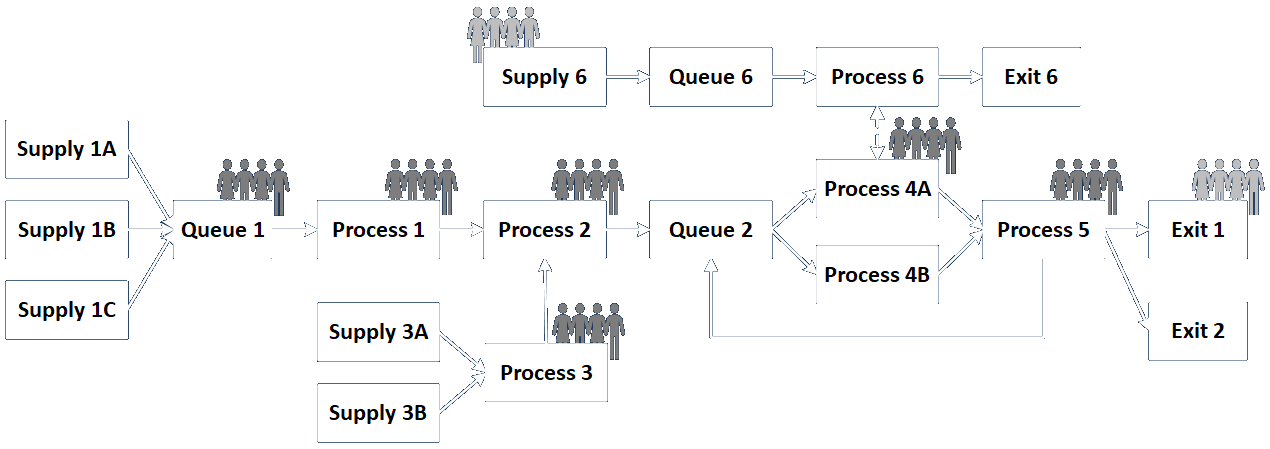

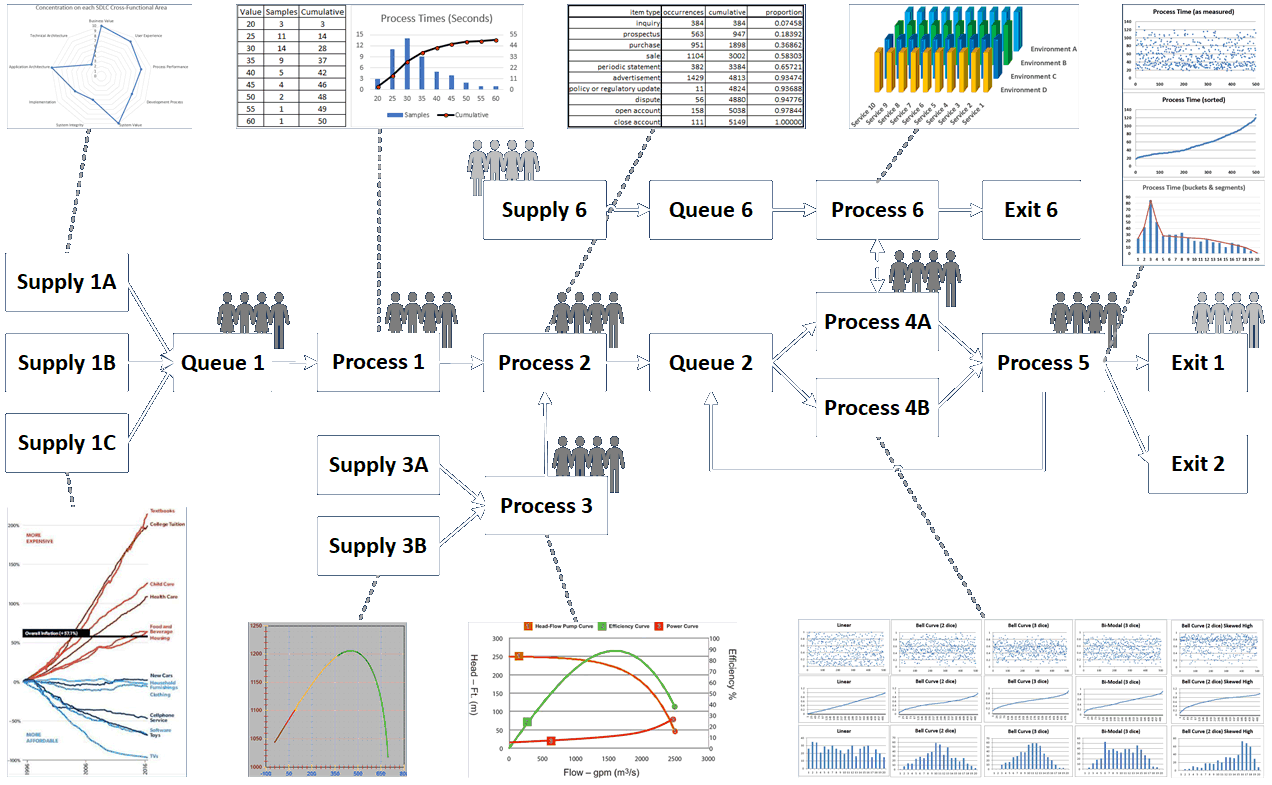

Data Goes In and Out of Every System Component

There are contexts for each situation.

Discovery vs. Data Collection

Discovery is a qualitative process. It identifies nouns (things) and verbs (actions, transformations, decisions, calculations).

It's how you go from this... to this.

Discovery comes first, so you know what data you need to collect.

Elicitation is discovering the customer's needs. Discovery is mapping the customer's existing process, or it is what you design by working back from the desired outcomes.

Link to detailed discussion.

Discovery vs. Data Collection (continued)

Data Collection is a quantitative process. It identifies adjectives (colors, dimensions, rates, times, volumes, capacities, materials, properties).

It's how you go from this... to this.

Think about what you'd need to know about a car in the context of traffic, parking, service (at a gas station or border crossing), repair, insurance, design, safety, manufacturing, marketing, finance, or anything else.

Methods of Discovery


It doesn't happen by accident!

Methods of Data Collection


Classes of Data

- Measure: A label for the value describing what it is or represents

- Type of Data:

- numeric: intensive value (temperature, velocity, rate, density – characteristic of material that doesn't depend on the amount present) vs. extensive value (quantity of energy, mass, count – characteristic of material that depends on amount present)

- text or string value: names, addresses, descriptions, memos, IDs

- enumerated types: color, classification, type

- logical: yes/no, true/false

- Continuous vs. Discrete: most numeric values are continuous but counting values, along with all non-numeric values, are discrete

- Deterministic vs. Stochastic: values intended to represent specific states (possibly as a function of other values) vs. groups or ranges of values that represent possible random outcomes
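A minimal Python sketch of two of the distinctions above. The function names, the mixing assumption (same material, constant specific heat, no heat loss), and the choice of a triangular distribution are all illustrative assumptions, not part of the original framework.

```python
import random


def combine_batches(mass_a, temp_a, mass_b, temp_b):
    """Combine two batches of the same material.

    Mass is extensive, so the amounts add. Temperature is intensive, so the
    result is a mass-weighted average (assumes equal, constant specific heat
    and no heat loss, which is a simplification for illustration).
    """
    total_mass = mass_a + mass_b
    mixed_temp = (mass_a * temp_a + mass_b * temp_b) / total_mass
    return total_mass, mixed_temp


def deterministic_service_time(items, base_s=30.0, per_item_s=5.0):
    """Deterministic: a specific value computed as a function of other values."""
    return base_s + per_item_s * items


def stochastic_service_time(rng, low_s, mode_s, high_s):
    """Stochastic: one random outcome drawn from a range of possible values."""
    return rng.triangular(low_s, high_s, mode_s)


total_mass, mixed_temp = combine_batches(10.0, 20.0, 30.0, 60.0)
fixed = deterministic_service_time(4)

rng = random.Random(42)  # seeded so runs are repeatable
sampled = stochastic_service_time(rng, 20.0, 45.0, 90.0)

print(total_mass, mixed_temp, fixed, sampled)
```

The practical point is that extensive values can be summed across records while intensive values generally cannot, and that stochastic values need a distribution (and enough samples to characterize it) rather than a single number.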

Link to detailed discussion.

Classes of Data (continued)

- Possible Range of Values: numeric ranges or defined enumerated values, along with format limitations (e.g., credit card numbers, phone numbers, postal addresses)

- Goal Values: higher is better, lower is better, defined/nominal is better

- Samples Required: the number of observations that should be made to obtain an accurate characterization of possible values or distributions

- Source and Availability: where and whether the data can be obtained and whether assumptions may have to be made in its absence

- Verification and Authority: how the data can be verified (for example, data items provided by approved individuals or organizations may be considered authoritative)
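One way to keep these attributes together is a small record per data item. The sketch below is only an illustration: the class name `DataItemSpec`, its field names, and the example values are hypothetical and would be adapted to the engagement at hand.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataItemSpec:
    """Describes one data item using the classes of data listed above."""
    measure: str                      # label for what the value represents
    data_type: str                    # "numeric", "text", "enumerated", "logical"
    continuous: bool                  # False for counts and non-numeric values
    stochastic: bool                  # True if it represents random outcomes
    min_value: Optional[float] = None
    max_value: Optional[float] = None
    goal: str = "nominal"             # "higher", "lower", or "nominal"
    samples_required: int = 30
    source: str = ""                  # where the data can be obtained
    authority: str = ""               # who can verify or vouch for it

    def in_range(self, value: float) -> bool:
        """Check a numeric value against the possible range, if one is set."""
        if self.min_value is not None and value < self.min_value:
            return False
        if self.max_value is not None and value > self.max_value:
            return False
        return True


# Hypothetical example: time to inspect one vehicle at a crossing.
inspection_time = DataItemSpec(
    measure="inspection time (s)",
    data_type="numeric",
    continuous=True,
    stochastic=True,
    min_value=0.0,
    max_value=3600.0,
    goal="lower",
    samples_required=100,
    source="on-site observation",
    authority="site operations manager",
)

print(inspection_time.in_range(45.0), inspection_time.in_range(-1.0))
```

Recording the source and authority alongside the range makes it easier to document assumptions when a value has to be estimated rather than observed.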

Link to detailed discussion.

Required Sample Size

There are many forms of this (rather chicken-and-egg) equation, but this one is fairly common:

$$n \ge \left(\frac{z \cdot \sigma}{\mathrm{MOE}}\right)^2$$

where:

n = minimum sample size (should typically be at least 30)

z = z-score (e.g., 1.96 for 95% confidence interval)

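σ = standard deviation of the population, usually estimated from the sample standard deviation (which is what makes the equation chicken-and-egg)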

MOE = margin of error (e.g., the acceptable difference between sample and population means, in units of whatever you're measuring)
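The calculation can be sketched in a few lines of Python. The function name `required_sample_size`, the `floor` parameter (which applies the "at least 30" guideline), and the example numbers are assumptions made for illustration.

```python
import math


def required_sample_size(z, sigma, moe, floor=30):
    """Return the minimum sample size n >= (z * sigma / moe)^2.

    z     : z-score for the desired confidence level (1.96 for 95%)
    sigma : standard deviation, usually estimated from a pilot sample
    moe   : acceptable difference between sample and population means,
            in the same units as sigma
    floor : lower bound applied to the result (30 by common guideline)
    """
    if moe <= 0:
        raise ValueError("moe must be positive")
    n = math.ceil((z * sigma / moe) ** 2)
    return max(n, floor)


# Hypothetical example: service times with an estimated standard deviation
# of 12 seconds, and a mean wanted to within 2 seconds at 95% confidence.
n = required_sample_size(z=1.96, sigma=12.0, moe=2.0)
print(n)  # 139
```

Because sigma is itself estimated from data, it is reasonable to rerun the calculation once more observations are in hand and collect more if the estimate has grown.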

Document all procedures and assumptions!

Link to detailed discussion.

Conditioning Data

Complete data may not be available, and for good reason. Keeping records is sometimes rightly judged to be less important than accomplishing other tasks.

Here are some options* for dealing with missing data (from Udemy course R Programming: Advanced Analytics In R For Data Science by Kirill Eremenko):

- Predict with 100% accuracy from accompanying information or independent research.

- Leave record as is, e.g., if data item is not needed or if analytical method takes this into account.

- Remove record entirely.

- Replace with mean or median.

- Fill in by exploring correlations and similarities.

- Introduce dummy variable for "missingness" and see if any insights can be gleaned from that subset.

* These mostly apply to individual values in larger data records.
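As a rough sketch of two of the options above (replace with the median, and add a dummy variable for "missingness"), using only the Python standard library. The record layout, the field name `wait_s`, and the helper `impute_with_median` are hypothetical.

```python
from statistics import median

# Hypothetical records; None marks a value that was never recorded.
records = [
    {"id": 1, "wait_s": 42.0},
    {"id": 2, "wait_s": None},
    {"id": 3, "wait_s": 38.0},
    {"id": 4, "wait_s": 55.0},
]


def impute_with_median(rows, field):
    """Return copies of rows with missing values replaced by the median.

    A companion "<field>_missing" flag is added so the imputed subset can
    still be identified and analyzed separately later.
    """
    observed = [r[field] for r in rows if r[field] is not None]
    fill = median(observed)
    cleaned = []
    for r in rows:
        is_missing = r[field] is None
        new_row = dict(r)  # copy, so the original records are left untouched
        new_row[field] = fill if is_missing else r[field]
        new_row[field + "_missing"] = is_missing
        cleaned.append(new_row)
    return cleaned


cleaned = impute_with_median(records, "wait_s")
print(cleaned[1])
```

Keeping the original records unchanged and flagging every imputed value makes the procedure easy to document and to reverse.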

Document all procedures and assumptions!

Conditioning Data (continued)

Data from different sources may need to be regularized so they all have the same units, formats, and so on. (This is a big part of ETL efforts.)
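A minimal sketch of regularizing units, assuming length readings that arrive tagged with a unit string. The table `TO_METERS`, the function `to_meters`, and the sample readings are illustrative assumptions.

```python
# Conversion factors to a single target unit (meters).
TO_METERS = {"m": 1.0, "cm": 0.01, "ft": 0.3048, "in": 0.0254}


def to_meters(value, unit):
    """Convert a length to meters, rejecting units that are not recognized."""
    try:
        factor = TO_METERS[unit]
    except KeyError:
        raise ValueError(f"unrecognized unit: {unit!r}")
    return value * factor


# Hypothetical readings from three different sources.
readings = [(2.0, "m"), (150.0, "cm"), (10.0, "ft")]
lengths_m = [to_meters(value, unit) for value, unit in readings]
print(lengths_m)
```

Failing loudly on an unknown unit is a deliberate choice here: silently passing a value through is how mixed units end up in an analysis.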

Sanity checks should be performed for internal consistency (e.g., a month's worth of hourly totals should match the total reported for the month).

Conversely, analysts should be aware that seasonality and similar effects mean subsets of larger collections of data may vary over time.
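The internal-consistency check described above can be sketched as a comparison between a sum of parts and a reported total. The function name `totals_consistent`, the tolerance, and the sample figures are assumptions for illustration.

```python
import math


def totals_consistent(parts, reported_total, rel_tol=1e-6):
    """Return True if the parts sum to the reported total within tolerance."""
    return math.isclose(math.fsum(parts), reported_total, rel_tol=rel_tol)


# Hypothetical data: 30 days of hourly counts, 5 units per hour.
hourly_totals = [5.0] * (24 * 30)

print(totals_consistent(hourly_totals, 3600.0))  # True
print(totals_consistent(hourly_totals, 3650.0))  # False
```

A failed check does not say which figure is wrong, only that the hourly data and the monthly report disagree and the source should be queried.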

Data items should be reviewed to see if reporting methods or formats have changed over time.

Data sources should be documented for points of contact, frequency of issue, permissions and sign-offs, procedures for obtaining alternate data, and so on.

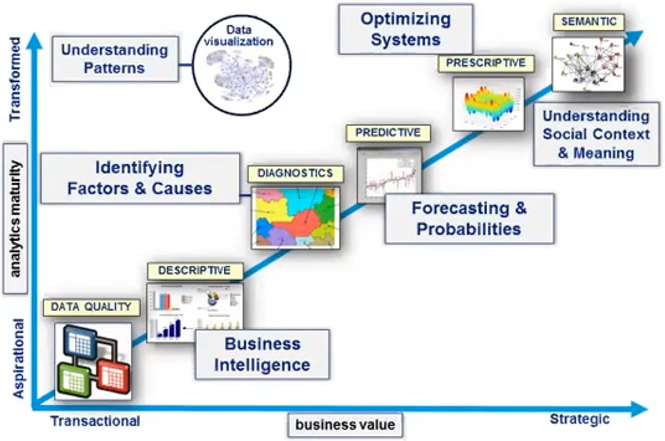

Handling of Data Gets More Abstract Over Time

...as data becomes more voluminous, and as what can be done with it becomes more powerful and valuable.

This presentation and other information can be found at my website:

E-mail: bob@rpchurchill.com

LinkedIn: linkedin.com/in/robertpchurchill